First, let’s agree the term “precision” is relative. Forage is a diverse system with an even more diverse set of management strategies. Regardless, every manager should be constantly striving to improve the precision with which nutrients are managed.

The ultimate goal of any precision nutrient management tool should be this: producing the highest quality output (in this case forage) with the least amount of input – ultimately, optimizing efficiencies and maximizing profits.

Within this readership there are those who are soil sampling at a 1-acre resolution and others who have likely not pulled a soil sample in the past decade. At both ends of the spectrum we can make improvements – let’s start basic and move forward.

A soil sample should be the basis for all nutrient management decisions. Is soil testing a perfected science? No, far from it. However, there must be a starting point.

A soil sample is the first piece of information we can start with, and the most basic data collection precision ag relies on to improve management decisions. When fertilizer is applied without a recent soil sample, the rate is pure guesswork. How many other management decisions are made on a farm or ranch by a guess?

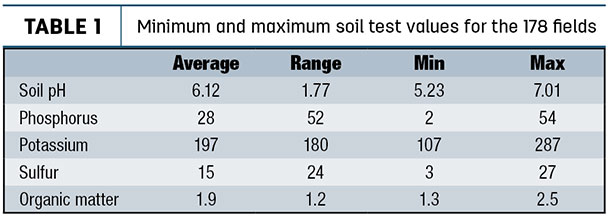

The composite soil sample is a great start, but it is just that – a start. While there are some soils that are very uniform, most are extremely variable. In a survey of 178 fields in the southern Great Plains (Table 1), soil pH averaged 6.12, phosphorus (Mehlich 3 phosphorus [M3P] and Bray 1 phosphorus [B1P]) averaged 28 ppm and soil test potassium (STK) averaged 196 ppm.

So on the average, the primary components of soil fertility were okay. However, within the average field, soil pH had a range of 1.8 units, M3P and B1P each had a range of 52 ppm and STK had a range of 180 ppm.
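To make the point concrete, the short sketch below uses made-up pH values for six sampling points in one hypothetical field (they are not from the survey) and shows how an average can look acceptable while the range stays wide. The 6.0 cutoff is only an illustrative threshold.

```python
from statistics import fmean

# Hypothetical pH readings from six points in one field (illustrative only,
# not data from the 178-field survey).
ph_points = [5.2, 5.6, 6.0, 6.3, 6.5, 7.0]

def summarize(values, threshold):
    """Return the mean, the range and the count of points below a threshold."""
    mean = fmean(values)
    value_range = max(values) - min(values)
    below = sum(1 for v in values if v < threshold)
    return mean, value_range, below

mean_ph, range_ph, n_low = summarize(ph_points, threshold=6.0)
print(f"mean pH {mean_ph:.2f}, range {range_ph:.1f} units, "
      f"{n_low} of {len(ph_points)} points below 6.0")
```

With these invented numbers, the average sits near 6.1 while a third of the points fall below the cutoff, which is the kind of detail a single composite sample cannot show.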

This data helps support the concept that we should find ways to increase the resolution, that is, to decrease the number of acres represented by a single soil sample. Increasing soil sample resolution is typically done using one of two methods – zone or grid.

Zone sampling

The basis of a zone sample is creating a smaller field. The biggest question with zones is how to draw the lines. There are dozens, if not hundreds, of possible methods, each with its own reasons and benefits. My basic recommendation is that before lines are drawn, goals have to be established. For example, if phosphorus or soil pH management is important, the basis for the lines should be soil-based.

This could be based on a soils map, soil texture, slope and on and on. If the target is improved nitrogen management, then the reason for drawing lines should be yield-based. This could be based on yield maps, aerial images, historic knowledge or many soil parameters.

Why does it matter? Two reasons: First, across the broad spectrum of soils and environments, any two nutrients are rarely spatially correlated, which means the zone that is best at describing phosphorus variability does an extremely poor job describing potassium variability. Second, more theoretically, the demand for each nutrient is driven by different factors.

Phosphorus (a soil-immobile nutrient) fertilizer need is driven by the soil P concentration (look up Bray’s Sufficiency Concept). Many use yield as a parameter for phosphorus application, but this reflects neither plant need nor a yield-maximizing practice. Fertilizing based on removal is done to prevent nutrient mining. However, nitrogen (a soil-mobile nutrient) fertilizer need is based on yield and crop removal. Hence, the common land-grant university N and sulfur recommendations are yield goal-based.

Grid sampling

To be honest, even the experts disagree on the hows, whys and ifs of grid sampling. I like data; therefore, I naturally lean towards grid sampling if the field warrants it. For me, the biggest benefit of grid over zone sampling is that soil data from zone samples is biased toward whatever parameter was used to set the zones; therefore, any resulting map, for any nutrient, must reflect the original zones.

In a grid, each data point is independent, therefore the maps of each nutrient can be independent, and (the science tells us) in most cases, nutrients are independent of each other.

Ideally, two pieces of information are available for determining whether a field is grid sampled or not. The first piece of information is a yield map from any previous crop. If yield is fairly uniform, I question the need for variable-rate management, much less the expense of grid sampling.

Regardless of the sampling method (zone or grid), the discussion is moot if spatial variability does not exist across the field. However, many forage producers may not have access to this kind of data.

One of the most useful decision-aid tools for grid sampling is the composite soil sample. The reason is simple statistics: A composite sample should be a representative average of the field. If the data is normally distributed, half of the field is above, and half the field is below, the sample average.

So the optimum fields to grid are those where the composite value falls at the point where the benefit of applying an input is in question, because it suggests that approximately half the field needs the input, while the other half likely does not. It is in this scenario that the return on investment can be greatest.

Take pH, for example: fields with a very low composite value should receive a flat broadcast application and be sampled again at a later date. Fields with a composite pH well above 6.0 are unlikely to have enough acres needing lime to warrant sending out an applicator.
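The “simple statistics” behind this reasoning can be sketched in a few lines. The sketch assumes within-field values are roughly normally distributed around the composite result. The spread (0.45 pH units, a rough guess from dividing the 1.8-unit average range by four) and the 6.0 lime threshold are assumptions for illustration, not recommendations.

```python
from statistics import NormalDist

def fraction_below(composite, spread, threshold):
    """Estimate the share of a field below a threshold, assuming values are
    normally distributed around the composite result (an assumption)."""
    return NormalDist(mu=composite, sigma=spread).cdf(threshold)

ASSUMED_SPREAD = 0.45   # hypothetical standard deviation, pH units
LIME_THRESHOLD = 6.0    # illustrative cutoff only

for composite_ph in (5.0, 6.0, 7.0):
    share = fraction_below(composite_ph, ASSUMED_SPREAD, LIME_THRESHOLD)
    print(f"composite pH {composite_ph:.1f}: "
          f"about {share:.0%} of field below {LIME_THRESHOLD}")
```

Under these assumptions, a composite right at the threshold implies about half the field is below it, while composites far above or below imply that nearly none or nearly all of the field needs lime, so a grid adds little information there.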

Is grid sampling a lifelong activity? No. The initial grid sampling will provide an indicator of both the variability level and the overall needs of the field. From that point, decisions can be made and actions taken. Identify the greatest limiting factor in the field based on the samples and focus on changing it. Zone sampling in subsequent years can be utilized to document change.

When that issue is resolved, move to the next factor. It may require grid sampling again or using the original grid to develop new management zones. For instance, if the greatest issue first identified on the field is soil acidity, then after the soil pH is neutralized, the field should be grid sampled again. The reason for this is changing soil pH will influence many nutrients, and the amount of change is not consistent, but dependent upon many other factors.

In precision ag we tend to look at layers: yield, soil, etc. However, none of these tells the whole story independently. An area in a field may have moderate soil fertility and be underproducing.

Using the data collected, the decision may be made to increase inputs; yet, the issue is a shallow, restrictive layer limiting production. Therefore, the extra inputs will be of no benefit and could even further reduce production. It is at this point I like to bring out the importance of “getting dirty.” There is no technology that can take the place of “boots on the ground” agronomy.

For producers who have historically performed intensive soil sampling, there is still room for improvement. Soil testing and nutrient management are not an exact science; in fact, they were originally built for broad, sweeping, statewide recommendations. As technology advances and inputs can be applied at sub-acre resolutions, all of the environment (weather, soil) by genotype interactions become more evident.

The next step in precision ag is to develop recommendations based upon site-specific crop responses. This is where nutrient response strips can further improve nutrient use efficiencies and crop production. In Oklahoma, nitrogen-rich strips are applied across fields (grain and forage) to determine in-season nitrogen needs.

Having a strip in the field with 50 to 100 extra units of N acts as a management tool which takes into account soil, environment and plant need. If the strip is visible, the field or zone needs more N; if it is not visible, then the crop is not deficient and at that point in the season does not need more N.

Producers have taken this approach for N and adapted it for P and K, with strips across the field at a zero and a high rate of either nutrient. After a few seasons, responsive and non-responsive zones are developed, and P and K applications are managed accordingly.
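A minimal sketch of how strip results might be turned into responsive and non-responsive zones follows. The zone names, the yields and the 5 percent response cutoff are all hypothetical; a real cutoff would depend on fertilizer cost and forage value.

```python
from statistics import fmean

# Hypothetical forage yields (tons/acre) by zone over three seasons:
# (zero-rate strip, high-rate strip). Values are illustrative only.
strip_yields = {
    "north": [(3.0, 3.6), (2.8, 3.5), (3.1, 3.7)],
    "south": [(4.0, 4.1), (4.2, 4.1), (3.9, 4.0)],
}

MIN_RESPONSE = 0.05  # assumed cutoff: 5% average yield gain

def classify_zone(pairs, min_response=MIN_RESPONSE):
    """Label a zone by the average relative yield gain of high over zero rate."""
    gains = [(high - zero) / zero for zero, high in pairs]
    return "responsive" if fmean(gains) >= min_response else "non-responsive"

zones = {name: classify_zone(pairs) for name, pairs in strip_yields.items()}
print(zones)
```

Averaging over several seasons, rather than acting on a single year, mirrors the “after a few seasons” caution above: one wet or dry year can hide or exaggerate a response.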

One misconception of precision ag is the end result should be a field with uniform yield from one corner to the other. This is often not the case; in fact, in many cases the variability in production across the field can be increased.

Theoretically, precision ag is applying inputs at the right rate in the right place. This means areas of the field that are yield-limited by underlying factors that cannot be managed receive fewer inputs with no effect on yield.

In other words, with variable-rate technology, bad areas of the field will never yield more; however, there will be areas of the field where yield can be increased. This means the difference in the yield from the lowest to highest point increases.

Maybe before variable-rate technology, the field ranged from 4 to 6 tons per acre; now, with variable-rate technology, it goes from 4 to 7 tons per acre (widening the gap).

Regardless of where a producer currently sits on the technology curve, there are potential ways to increase productivity and efficiency. There is nothing wrong with taking baby steps; it is often the simple things that lead to the greatest return.