LOW DIET ACCURACY DECREASES PROFITABILITY

High feed prices and volatility caused by market and supply chain disruptions during the COVID pandemic are underscoring the importance of maximizing feed use efficiency.

Diet accuracy is one management factor that can improve feed efficiency. In this context, we define accuracy of the delivered diet as the alignment between the nutrient composition of the formulated diet and that of the diet delivered to the feedbunk. Low accuracy of delivered diets increases the risk of underfeeding and overfeeding cows because the nutrients delivered to the bunk and available to the cow become uncertain and inconsistent. Underfeeding and overfeeding can decrease milk yield, increase nutrient waste, and increase the risk of health issues that reduce the efficiency with which dietary nutrients are used. Low accuracy of delivered diets can result from poor mixing management and variability in ingredient composition. However, better mixing management and a better understanding and management of ingredient variability can improve diet accuracy. For example, optimized sampling practices can identify important changes in feed composition and enable timely adjustments to the diet formula, minimizing the risk of underfeeding or overfeeding cows.

Production and feed efficiency losses due to diet variability may seem small when considered for an individual cow, but these losses add up, and improved diet and feed management can lead to real savings. For example, in a simulation study, St-Pierre and Cobanov (2007) found that implementing optimum sampling practices on a 1,000-cow farm could decrease the costs related to changes in forage composition by $250 a day.

IMPACTS OF MIXING AND INGREDIENT COMPONENT VARIATION

Ingredient loading error, loading order, mixing time, mixer blade and kicker plate condition, and mixer scale accuracy are key sources of variability introduced during the mixing process (Mikus, 2012; Trillo et al., 2016). Good maintenance protocols and record-keeping will help maintain the accuracy of the delivered diet and prevent unnecessary losses at the feedbunk.

Feed ingredients contribute to the variation of the delivered diet in proportion to the square of their inclusion rate and the degree of nutrient variability in the ingredient. Byproducts have the highest level of nutrient variability, followed by forages, and grains have the lowest. Because of their high inclusion rate (40 to 60 percent), forages often account for the largest proportion of diet nutrient variation. They are therefore the focus of an ongoing study to develop management protocols that minimize the impacts of forage nutrient variation.
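The relationship between inclusion rate and ingredient variability can be illustrated with a short calculation. The sketch below is only an illustration: it assumes that ingredient nutrient values vary independently of one another, so that each ingredient contributes its squared inclusion fraction times its nutrient variance. The function name, the ingredient list, and all numbers are hypothetical and are not data from the study.

```python
def variance_contributions(ingredients):
    """Return each ingredient's contribution to diet nutrient variance.

    ingredients maps a name to (inclusion_fraction, nutrient_sd).
    Assumes ingredient nutrient values vary independently of each other.
    """
    contributions = {
        name: (fraction ** 2) * (sd ** 2)
        for name, (fraction, sd) in ingredients.items()
    }
    total = sum(contributions.values())
    shares = {name: value / total for name, value in contributions.items()}
    return contributions, total, shares


# Hypothetical diet: inclusion as a fraction of diet dry matter,
# nutrient SD in percentage units of the nutrient (illustrative only).
example_diet = {
    "forage": (0.50, 1.5),
    "grain": (0.35, 0.5),
    "byproduct": (0.15, 3.0),
}

contributions, total_variance, shares = variance_contributions(example_diet)
for name, share in shares.items():
    print(f"{name}: {share:.0%} of diet nutrient variance")
```

In this made-up diet, the forage accounts for the largest share of diet nutrient variance even though the byproduct is the most variable ingredient, because the forage inclusion rate is squared.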

UNDERSTANDING AND MANAGING FORAGE NUTRIENT VARIABILITY

Our project includes three main goals:

1. Improve understanding and quantify the factors that influence variability

2. Optimize sampling practices for farm-specific conditions

3. Develop a tool to guide implementation of optimized practices and monitor forage nutrient composition

During the summer of 2020 and spring of 2021, we collected corn silage and haylage samples at harvest and feedout from eight New York dairy farms with three silage storage methods (bunker, bag, and drive-over pile). During harvest, we collected samples from each truck load delivered to the silo and composited samples for every 15 to 20 acres within each field. We recorded the location within the bunkers and silo bags of the forage from each field, the weather conditions during growth and at harvest, and the soil type and texture of each field. During the spring of 2021, we collected two independent corn silage, haylage, and TMR samples at feedout, three times per week for 16 weeks, from the same eight New York dairy farms sampled at harvest. We recorded the weather conditions on the day of feedout and identified the fields of origin of the forages fed that day. We used a mixed-model analysis of the harvest and feedout datasets to identify the most relevant factors causing variation in the nutrient composition of corn silage and haylage.

Unsurprisingly, the mixed-model analyses identified field as the largest source of variation in forage nutrient content during harvest and feedout. This suggests that reformulating diets when forage from a new field is fed could improve diet accuracy. Therefore, we estimated the average field-of-origin feeding time for each silage type at each farm and used those values as inputs to an optimization algorithm. Using this method, our estimates of the average stable time of forage nutrient composition for corn silage and haylage ranged from four to 18 days, depending on farm size and silo type. This is much shorter than the 30 days suggested by St-Pierre and Cobanov (2007). If true, the shorter stable periods suggest that forage composition changes more frequently than previously suggested, which will impact diet accuracy and associated costs.
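One simple way to estimate an average field-of-origin feeding time from feedout records is to average the lengths of consecutive runs of days on which forage from the same field was fed. The sketch below assumes a hypothetical daily log with one field label per day. It is not necessarily the calculation used in the study, and the field names and run lengths are made up.

```python
from itertools import groupby


def average_stable_time(daily_fields):
    """Average number of consecutive days fed from the same field.

    daily_fields is a date-ordered list with one field label per feeding day.
    """
    if not daily_fields:
        raise ValueError("daily_fields must contain at least one day")
    # groupby collapses consecutive identical labels into one run each.
    run_lengths = [sum(1 for _ in run) for _, run in groupby(daily_fields)]
    return sum(run_lengths) / len(run_lengths)


# Hypothetical feedout log: 5 days from field A, 3 from B, 10 from C.
feedout_log = ["A"] * 5 + ["B"] * 3 + ["C"] * 10
stable_days = average_stable_time(feedout_log)
print(f"Average stable time: {stable_days:.1f} days")
```

A field that reappears later in the log counts as a new run, which matches the idea that each change in field of origin is a potential change in forage composition.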

OPTIMUM SAMPLING PRACTICES AT DAIRY FARMS

To illustrate the influence of the expected stable time, we found optimal sampling practices for corn silage and haylage on a small farm (100 milking cows) or a large farm (1,200 milking cows) with either bunk or bag silos, using the renewal reward model and genetic algorithm suggested by St-Pierre and Cobanov (2007) to minimize the Total Quality Cost. Total Quality Cost refers to the cost related to sampling and to changes in forage components. It includes the cost of labor associated with sample collection and reformulation, sample analysis costs, and expected losses in milk production or increases in feed costs due to underfeeding or overfeeding. To optimize the sampling methods, we found the number of samples to collect, the sampling frequency, and the acceptable limit of variation before diet reformulation that minimized the Total Quality Cost for each combination of management practices.
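The components of the Total Quality Cost can be laid out as simple daily bookkeeping. The sketch below is not the renewal reward model or the genetic algorithm of St-Pierre and Cobanov (2007). It only adds up the cost components named above for one candidate sampling plan, and every parameter name and dollar value is a hypothetical placeholder.

```python
def total_quality_cost_per_day(
    n_samples,
    days_between_samplings,
    analysis_cost_per_sample,
    labor_cost_per_sampling,
    reformulation_cost_per_day,
    expected_loss_per_day,
):
    """Add up the daily cost of one sampling plan (simplified bookkeeping).

    expected_loss_per_day stands in for lost milk or extra feed cost from
    underfeeding or overfeeding; here it is supplied by the user rather
    than derived from a model.
    """
    cost_per_sampling = n_samples * analysis_cost_per_sample + labor_cost_per_sampling
    sampling_cost_per_day = cost_per_sampling / days_between_samplings
    return sampling_cost_per_day + reformulation_cost_per_day + expected_loss_per_day


# Hypothetical plan: 2 samples every 7 days (all dollar values are made up).
plan_cost = total_quality_cost_per_day(
    n_samples=2,
    days_between_samplings=7,
    analysis_cost_per_sample=20.0,
    labor_cost_per_sampling=15.0,
    reformulation_cost_per_day=5.0,
    expected_loss_per_day=30.0,
)
print(f"Total Quality Cost: ${plan_cost:.2f} per day")
```

In a full optimization, the expected loss would itself depend on the number of samples, the sampling frequency, and the acceptable limit of variation, which is why a search method is needed to find the cost-minimizing combination.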

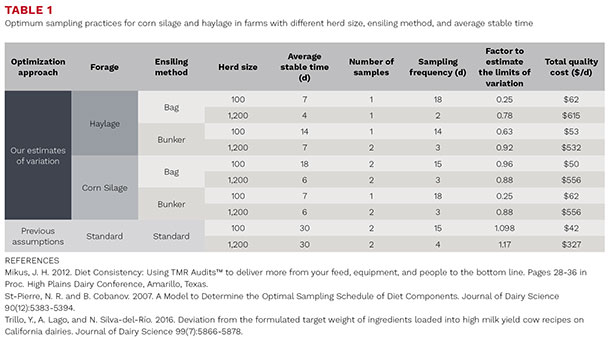

Consistent with results from St-Pierre and Cobanov (2007), our analysis suggests that the number of samples, sampling frequency, and tolerable level of variation that minimize the Total Quality Cost differ for farms of different sizes and with different expected variation in forage nutrient composition (Table 1).

Also, in alignment with previous reports, the recommended number of samples ranged from one to two, and farms with a greater number of cows benefit from more frequent sampling. However, our results suggest that smaller ranges of acceptable variation are needed to minimize the Total Quality Cost associated with forage nutrient variability. In practice, this recommendation means that a forage monitoring and diet reformulation protocol would be more sensitive to smaller changes in corn silage and haylage nutrient composition. The Total Quality Cost estimates from our approach, which quantifies expected forage variability through the average stable time, are higher than the estimates from St-Pierre and Cobanov (2007). These higher costs result from increased sampling and lab analysis costs due to the smaller tolerable level of variation, and from the expected higher frequency of overfeeding and underfeeding.

MONITORING FORAGE COMPONENT VARIABILITY

Nutritionists and farm managers can use the recommended sampling practices produced by the optimization method under development to monitor forage nutrient composition with x-bar charts. This tool can help nutritionists and farmers detect abrupt changes in forage components and determine whether the changes warrant action. The optimal limits of variation for each farm provided by our algorithm can be used as inputs to set the upper and lower limits for the allowable change in a forage component. When a sample analysis indicates that a forage component exceeds the acceptable level of variation, industrial process control methods recommend taking another sample to verify the result and exclude the possibility of sampling or laboratory error. If a second sample analysis confirms the abrupt change in forage composition, the recommended action is to adjust the diet formulation.

However, to ensure that process control recommendations translate effectively to dairy cattle diet formulation and delivery, our next steps will be to implement our proposed sampling and diet management protocol in a commercial farm setting and measure changes in diet accuracy and milk production. If the results confirm the benefits, we will implement the protocol optimization method and x-bar chart in a decision support tool to help guide forage management, sampling, and the timing of diet reformulation.
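The decision rule described above (check the sample mean against the limits, resample if it falls outside, and reformulate only if the second result confirms the change) can be sketched as follows. This is a minimal illustration that assumes symmetric limits around a target value. The function names, the target, the allowed change, and the sample values are all hypothetical and do not come from the project's decision support tool.

```python
from statistics import mean


def outside_limits(sample_values, target, allowed_change):
    """True when the sample mean (x-bar) falls outside target +/- allowed_change."""
    return abs(mean(sample_values) - target) > allowed_change


def recommend_action(first_samples, target, allowed_change, confirm_samples=None):
    """Apply the two-step rule: signal, verify with a new sample, then act."""
    if not outside_limits(first_samples, target, allowed_change):
        return "no action"
    if confirm_samples is None:
        # Out-of-limit result: verify before changing the diet.
        return "resample"
    if outside_limits(confirm_samples, target, allowed_change):
        return "reformulate"
    # The second result did not confirm the change (possible sampling or lab error).
    return "no action"


# Hypothetical corn silage dry matter monitoring: target 35%, limits +/- 1 unit.
print(recommend_action([35.0, 35.4], target=35.0, allowed_change=1.0))
print(recommend_action([37.0, 36.6], target=35.0, allowed_change=1.0))
print(recommend_action([37.0, 36.6], target=35.0, allowed_change=1.0,
                       confirm_samples=[36.9, 37.1]))
```

After a reformulation, the target would presumably be reset to the newly confirmed value so that later samples are compared against the current forage rather than the old one.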

This article appeared in PRO-DAIRY's The Manager in March 2022. To learn more about Cornell CALS PRO-DAIRY, visit PRO-DAIRY.